About 18 months ago, we were doing impressive demos.

An agent writes a component live on screen. An agent fixes a bug in 30 seconds. Everyone in the room goes, "wow." Then the meeting ends and a human engineer goes and actually finishes the work. 😆

We were at Gen 1.5. Better than autocomplete, not yet production-capable. We were using AI to go faster on individual tasks, not to fundamentally change how we build systems.

What we do now is different. Not incrementally different, but structurally different.

Last week I shipped a full admin dashboard for Tickt, an Australian marketplace connecting businesses with service providers. 21,799 lines of TypeScript. 270 files. Auth flows, 12+ modules: organizations, disputes, payments, revenue, push notifications, full user management with dispute resolution dialogs, commission tier settings, GST config.

Production-ready. Deployed. Five days.

And before that, a different client: infrastructure overhaul. Existing cloud architecture analyzed, Docker containers security-hardened, costs cut by 40%+. Same timeframe. Same methodology.

This newsletter is about the gap between the impressive demos and those outcomes. What we actually had to invest, build, and change to close it.

The honest reason demos aren't production

Demos work because they're supervised. You're watching the agent. You know what it's supposed to do. You catch the wrong move before it compounds.

Production doesn't work that way. In production, the agent is running a 10-phase build. It's making thousands of micro-decisions across hundreds of files. A wrong assumption in Phase 2 creates a bug you don't find until Phase 7. A gap in the context creates a component that looks right but doesn't match the API contract.

For a long time, our answer was to just keep a senior engineer very close to the agent at all times. That's not really agentic engineering. That's pair programming with extra steps.

The real shift happened when we stopped asking, "How do we make agents faster?" and started asking, "What does an agent actually need in order to make no wrong guesses?"

The investment that made it real

First thing we did: gave the entire team Claude Max subscriptions.

Not just the seniors. Everyone. Every engineer, every PM who touches a spec.

This sounds obvious, but it wasn't. There was a real moment where we had to decide if we believed this enough to invest across the whole team, not just let a few people experiment. The answer had to be yes. You can't build a team that thinks and operates agentically if half of them are rate-limited, using different tools, or treating it as optional.

The second thing: we stopped treating agentic development as a productivity hack and started treating it as a discipline with its own methodology, its own skill set, and its own failure modes.

That meant building the harness. Not just running agents, but building the infrastructure that makes agents reliable.

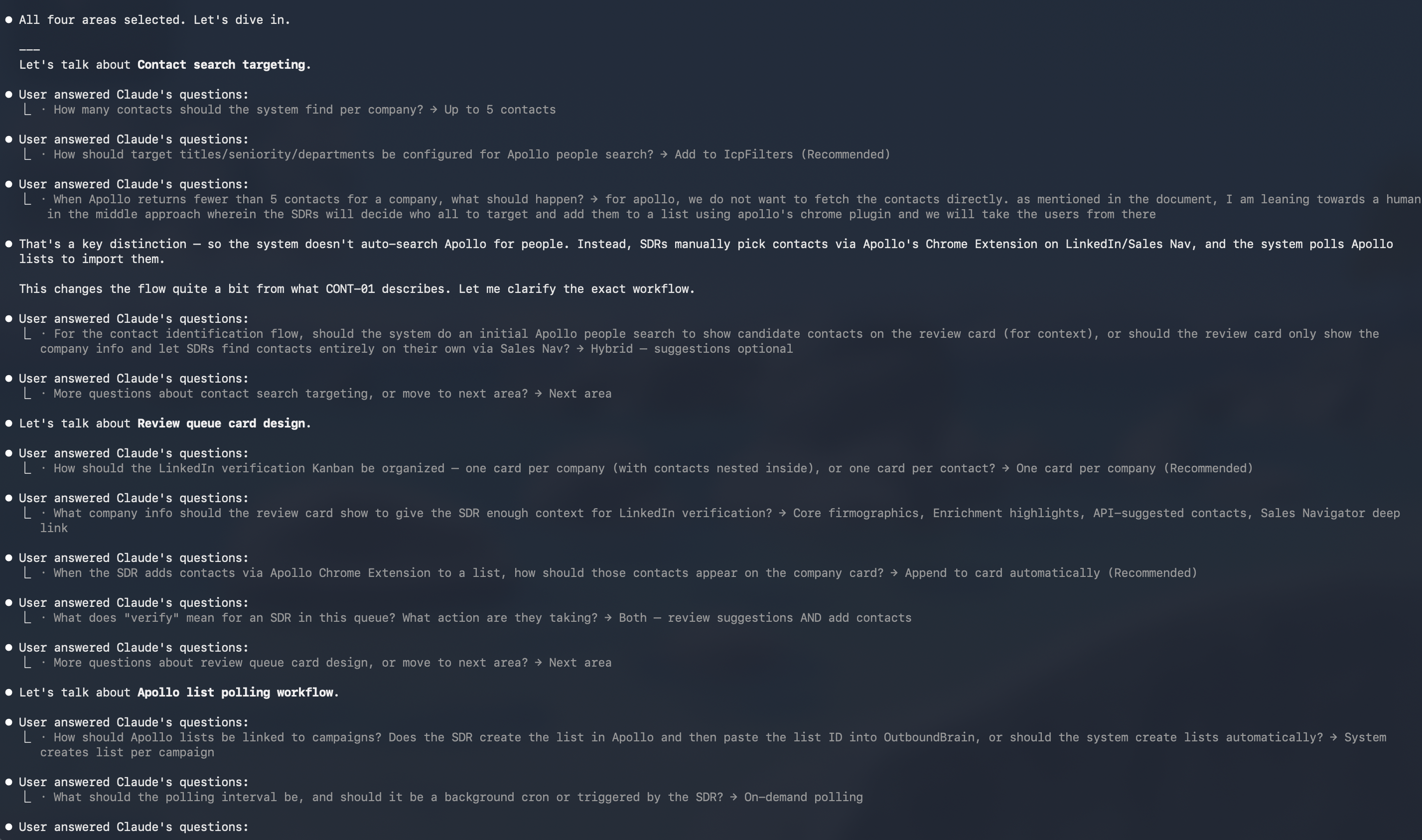

Barrage of questions using the GSD framework

What the harness looks like in practice

CLAUDE.md

Every project starts with a CLAUDE.md committed to the repo. Not a README. Not documentation for humans. A persistent context file that travels with every agent, every subagent, and every session throughout the entire project lifecycle.

For Tickt, the CLAUDE.md defined the stack (Next.js 16, Tailwind 4, shadcn/ui), the API proxy pattern, the folder structure, the naming conventions, the auth flow, and the TypeScript strictness level. When an agent started a new phase, it read this file, the project's constitution, first. No guessing. No drift.

Skills

Skills are reusable capability files. Each one encodes the accumulated knowledge from every time we've done a thing before — what breaks, what patterns work, what edge cases bite you.

We have skills for creating production Word docs, Excel sheets, PDFs, presentations. For Tickt specifically, we built a skill for ‘filterable data table with side panel.’ We needed that pattern eight times across different modules. The first time, an agent figured it out. Every subsequent time, it loaded the skill and skipped the figuring-out step entirely.

For the infra client, we built a skill encoding Docker security best practices: base image selection, no running as root, layer hygiene, secret handling, and multi-stage builds. Every container got the same standard applied consistently, by the same agent, from the same encoded knowledge.
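To make "encoded knowledge" concrete, here is a minimal sketch of how a few of those Docker rules could be expressed as a check an agent or hook can run. It is not the actual skill. The function name `auditDockerfile`, the rule names, and the secret-name pattern are all assumptions for illustration, and it only covers base image pinning, non-root users, multi-stage builds, and baked-in secrets. Layer hygiene is left out.

```typescript
interface Finding {
  rule: string;
  message: string;
}

// Illustrative name fragments that suggest a secret is being baked into the image.
const SECRET_NAME = /(SECRET|PASSWORD|TOKEN|API_KEY)/i;

// Hypothetical helper: returns one finding per rule a Dockerfile violates.
// Deliberately naive: it does not understand stage aliases or FROM flags.
function auditDockerfile(text: string): Finding[] {
  const lines = text
    .split("\n")
    .map((line) => line.trim())
    .filter((line) => line.length > 0 && !line.startsWith("#"));
  const findings: Finding[] = [];

  // Base image selection: every FROM should name an explicit, non-latest tag or digest.
  const fromLines = lines.filter((line) => /^FROM\s/i.test(line));
  for (const line of fromLines) {
    const image = line.split(/\s+/)[1] ?? "";
    const pinned =
      (image.includes(":") || image.includes("@")) && !image.endsWith(":latest");
    if (!pinned) {
      findings.push({ rule: "pinned-base-image", message: `unpinned base image: ${image}` });
    }
  }

  // Multi-stage builds: a single FROM means build tooling ships in the final image.
  if (fromLines.length < 2) {
    findings.push({ rule: "multi-stage", message: "single-stage build" });
  }

  // No running as root: the last USER instruction decides the runtime user.
  const userLines = lines.filter((line) => /^USER\s/i.test(line));
  const lastUserLine = userLines[userLines.length - 1] ?? "";
  const lastUser = lastUserLine.split(/\s+/)[1] ?? "";
  if (lastUser === "" || lastUser === "root" || lastUser === "0") {
    findings.push({ rule: "non-root-user", message: "container runs as root" });
  }

  // Secret handling: flag ENV/ARG lines whose text looks like a credential.
  for (const line of lines) {
    if (/^(ENV|ARG)\s/i.test(line) && SECRET_NAME.test(line)) {
      findings.push({ rule: "no-baked-secrets", message: `possible secret in: ${line}` });
    }
  }

  return findings;
}
```

An empty result means the file passes these four checks, nothing more. The point is that the standard lives in one place and gets applied the same way to every container.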

Hooks

The enforcement layer. Claude Code hooks let you run shell commands at lifecycle points: before a tool executes, after it completes, and when a session ends. We use them to:

Auto-commit after each phase completes (the git history becomes a progress log)

Validate TypeScript before an agent marks a task done: if it doesn't compile, the task isn't done

Send a Slack notification when a long-running build finishes

Block certain operations, like deleting your production databases 🫠, unless the phase spec exists and is complete

This is where most teams stop short. They get agents running fast and call it done. The hooks are what turn "fast and occasionally chaotic" into "fast and systematically controlled."
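The blocking rule is the easiest one to sketch. What follows is a rough illustration of the decision logic only, not our actual hook. The `ToolCall` and `PhaseSpec` shapes, the `gate` function, and the list of destructive patterns are all hypothetical; the real payload a hook receives may look different.

```typescript
// Hypothetical shape of a tool call as seen by a pre-execution hook.
// The real payload fields may differ; treat these names as assumptions.
interface ToolCall {
  tool: string;    // e.g. "Bash"
  command: string; // the shell command the agent wants to run
}

// Hypothetical summary of the current phase's spec document.
interface PhaseSpec {
  exists: boolean;
  complete: boolean;
}

type Decision = { allow: true } | { allow: false; reason: string };

// Illustrative patterns for destructive commands; not an exhaustive list.
const DESTRUCTIVE_PATTERNS: RegExp[] = [
  /\bdrop\s+(database|table)\b/i,
  /\brm\s+-rf\b/,
  /\bterraform\s+destroy\b/,
];

function gate(call: ToolCall, spec: PhaseSpec): Decision {
  // Only shell commands are inspected in this sketch.
  if (call.tool !== "Bash") return { allow: true };

  const destructive = DESTRUCTIVE_PATTERNS.some((p) => p.test(call.command));
  if (!destructive) return { allow: true };

  // Destructive commands pass only when the phase spec is present and finished.
  if (!spec.exists) return { allow: false, reason: "phase spec is missing" };
  if (!spec.complete) return { allow: false, reason: "phase spec is incomplete" };
  return { allow: true };
}
```

In a real setup, a small wrapper script would read the tool call, run something like `gate`, and signal a block back to the agent. Check the hooks documentation for the exact input format and exit-code contract before relying on it.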

Spec-driven phases

On Tickt, we mapped ten phases before writing a line of code. Phase 1: foundation. Phase 2: auth. Phase 3: core modules. Each phase had a plan document defining what "done" meant, what the component tree looked like, what the API endpoints were, and which edge cases needed handling.

The agent reads the phase plan. It executes against it. It doesn't invent scope.

This is spec-driven development taken seriously: the spec is the product, and the code is the output.
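One way to picture a phase plan is as a typed structure with a completeness check. This is a sketch under assumptions, not our real plan format: the `PhasePlan` fields mirror the four things a plan defines, and the sample values for the auth phase are placeholders, not the actual Tickt spec.

```typescript
// Hypothetical structure for a phase plan; field names are assumptions.
interface PhasePlan {
  phase: number;
  name: string;
  doneCriteria: string[];  // what "done" means
  componentTree: string[]; // what gets built
  apiEndpoints: string[];  // what it talks to
  edgeCases: string[];     // what must be handled
}

const REQUIRED_SECTIONS = [
  "doneCriteria",
  "componentTree",
  "apiEndpoints",
  "edgeCases",
] as const;

// Returns the names of required sections that are still empty.
function missingSections(plan: PhasePlan): string[] {
  return REQUIRED_SECTIONS.filter((section) => plan[section].length === 0);
}

// Placeholder content for illustration only.
const authPlan: PhasePlan = {
  phase: 2,
  name: "auth",
  doneCriteria: ["Login and logout work end to end"],
  componentTree: ["LoginPage > LoginForm"],
  apiEndpoints: ["POST /auth/login"],
  edgeCases: [], // not written yet, so the plan is not ready to execute
};

const gaps = missingSections(authPlan);
```

A plan with gaps is not ready for an agent. Whatever the spec leaves undefined is exactly where the agent has to guess.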

Two projects, same playbook

Tickt: greenfield product build. Five days. 21,799 lines. The build ran like a factory because the blueprint was done before the factory opened.

There were rough edges — TypeScript strict mode violations that needed a cleanup pass, 15+ Cloudflare deployment commits when the config kept losing env vars, a Socket.IO integration we added and ripped out two days later when we realized it was over-engineered. But those were implementation bugs, not design bugs. The architecture held.

Infra client: no product, no components. Just an existing cloud setup that needed work. Same methodology applied to a completely different surface. Spec first (what are we auditing, what does "better" mean, what are the constraints). Skills for Docker hardening. Hooks to validate that changes actually improved the security posture before signing off.

Result: 40%+ cost reduction, hardened containers, a deployment setup that follows least-privilege.

What the two engagements proved is that the methodology isn't tied to product development specifically. It's a general framework for agentic execution. You can point it at infrastructure, at testing, at documentation. Anywhere you need consistent, systematic, production-quality output.

What this means for the team

I've been watching the skill shift happen inside SoluteLabs for 18 months now.

The engineers who adapted fastest weren't the ones who learned the most tools. They were the ones who got better at the thing agents genuinely can't do on their own: defining what "done" looks like before the work starts.

Spec-writing is a learnable skill. But it's different from code-writing. It requires thinking through edge cases before you encounter them, defining data contracts before you build the UI, writing a sentence like "the dispute resolution dialog should close the panel optimistically but roll back on API failure," and knowing exactly why that phrase needs to exist before the agent touches the feature.
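To show how much that one sentence pins down, here is a minimal sketch of the behavior it describes, written as a plain state reducer. The state shape and event names are hypothetical, and this is not the Tickt implementation.

```typescript
// Hypothetical panel state for the dispute resolution dialog.
interface PanelState {
  open: boolean;
  error: string | null;
}

type PanelEvent =
  | { type: "resolveSubmitted" }
  | { type: "resolveSucceeded" }
  | { type: "resolveFailed"; message: string };

function reducePanel(state: PanelState, event: PanelEvent): PanelState {
  switch (event.type) {
    case "resolveSubmitted":
      // Optimistic: close the panel before the API has answered.
      return { ...state, open: false, error: null };
    case "resolveSucceeded":
      // The optimistic guess was right; nothing to undo.
      return { ...state, open: false, error: null };
    case "resolveFailed":
      // Roll back: reopen the panel and surface the failure.
      return { ...state, open: true, error: event.message };
  }
}
```

Without the sentence in the spec, an agent could reasonably keep the panel open until the API responds, or close it and silently swallow the failure. Both look fine in a demo.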

The developers who struggle in this model are the ones who used to figure it out as they went. That instinct (comfortable, fast, experienced) doesn't translate to agentic execution. Agents can't figure it out as they go. They need the plan.

The bottleneck moved.

It used to be: how fast can we write this?

Now it's: how clearly can we define this?

Where we are now

We've been running at Gen 3 (plan → execute → verify) for about a month on production projects. 80%+ of the code on new engagements is AI-generated. Not prototypes. Not internal tools. Production systems, shipped and maintained.

The question I'm sitting with now is where this breaks. What class of problem still requires a human in the execution loop, not just the design loop?

I have guesses. I'll share them when I have data.

If you're a CTO, engineering lead, or technical founder thinking about how to actually make this transition, not just demo it, I'd genuinely like to compare notes on what worked and what didn't.

PS: I will be in Australia for the next two weeks talking to founders and builders. If you’re around and available, I would love to chat.

Reply to this. I read every one.

About me

Working from the Mountains

Karan Shah: Engineer turned Founder

15 years ago, I started my career as a software engineer. Took the entrepreneurial plunge with less than 5 years of work experience.

Since then, I’ve strived to work at the intersection of Product Engineering, Design, Marketing, and Sales.

I’ve had the pleasure of working with some of the fastest-growing startups and large enterprises alike. From building MVPs and helping clients raise funds to supporting large enterprises going for an IPO!

Brew. Build. Breakthrough.

Karan Shah

Founder & CEO, SoluteLabs

Building AI-native products before it became cool.