Last weekend I spent a full day, and then some, vibe-coding an AI agent.

No team, no sprint, no ticket. Just me, Claude Code, and a question I'd been sitting on for months: is SoluteLabs' positioning actually landing?

I want to be upfront about the "full day and then some" part, because I think the way people talk about agentic development online sets unrealistic expectations. You see posts that suggest you can describe a problem at 9 a.m. and have a finished product by lunch. That hasn't been my experience, and I don't think it's most people's experience either.

What actually happened: I had to think carefully about which data sources to connect and why. I had to structure the problem clearly before the agent could do anything useful with it. I had to iterate on the approach when early outputs weren't quite right.

And I had to trust a framework (more on that in a moment) to handle the parts that would've caused the whole thing to fall apart if I'd just winged it.

The agent I ended up building pulls from GA4 for traffic and user behavior, Google Search Console for the actual queries bringing people to the SoluteLabs website, and DataForSEO for keyword gaps and competitor signals. It cross-references all three, looks for patterns and contradictions, and produces a detailed 10,000+ line report on exactly how we should be repositioning our content and messaging.
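To make "cross-references all three" a little more concrete, here is a minimal Python sketch of two checks such an agent might run. It is an illustration only, not the agent's actual code: the `QueryStat` shape, the thresholds, and the sample rows are all assumptions, and no real GA4, Search Console, or DataForSEO calls are made.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QueryStat:
    """One search query row (hypothetical shape, loosely modeled on a search report)."""
    query: str
    impressions: int
    clicks: int


def low_ctr_queries(stats, min_impressions=100, max_ctr=0.02):
    """Flag queries that are shown often but rarely clicked.

    High impressions with a low click-through rate can hint that the page
    title or message does not match what the searcher expected.
    """
    flagged = []
    for s in stats:
        if s.impressions < min_impressions:
            continue  # too little data to say anything
        ctr = s.clicks / s.impressions
        if ctr <= max_ctr:
            flagged.append((s.query, round(ctr, 4)))
    return sorted(flagged, key=lambda item: item[1])


def keyword_gaps(our_queries, competitor_keywords):
    """Return competitor keywords we do not currently appear for (case-insensitive)."""
    ours = {q.lower() for q in our_queries}
    return sorted(k for k in competitor_keywords if k.lower() not in ours)


# Made-up sample data, for illustration only.
sample_stats = [
    QueryStat("ai agent development", 1200, 12),
    QueryStat("solutelabs", 300, 150),
    QueryStat("mvp development cost", 80, 0),
]
sample_competitor_keywords = ["AI agent development", "agentic workflows", "llm consulting"]

flagged = low_ctr_queries(sample_stats)
gaps = keyword_gaps([s.query for s in sample_stats], sample_competitor_keywords)
print(flagged)
print(gaps)
```

The useful part is the combination: a query that shows up in the low-CTR list and a neighbouring keyword that shows up in the gap list are two signals pointing at the same positioning problem.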

A consultant doing the same work would have taken weeks. I've paid for that before, more than once. This task took a full day of focused effort and a fraction of the cost. (I had to upgrade to the Claude Max plan and still had to pay for the API that did the analysis, lol.)

That's still an extraordinary compression of time and money, even when you're honest that it wasn't effortless.

Why It Worked: The Framework Behind the Build

Here's what I think most "vibe-coding" posts miss. The reason the agent produced something useful, rather than the inconsistent, half-finished output that gives vibe-coding a bad rap, is that I used a framework called Get Shit Done (GSD).

GSD is an open-source meta-prompting and context engineering system built specifically for Claude Code. It currently has 12,800 GitHub stars, and after spending a full day with it, I understand why.

The core problem it solves is something called context rot. As you work through a long Claude Code session, quality gradually degrades. The context window fills up with the history of everything you've discussed, leaving less and less room for Claude to actually think. You start sharp and end up with inconsistent work, forgotten decisions, and an agent that seems to lose the plot halfway through.

GSD fixes this problem by structuring your work into a clean loop: discuss your intent and constraints upfront, plan the work in atomic steps with XML structure, execute each step in a fresh 200,000-token context window, then verify the output before moving on. The heavy lifting (research, planning, parallel execution) all happens in subagent contexts. Your main session stays lean and responsive throughout.
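The shape of that loop can be sketched in a few lines of Python. This is not GSD's implementation, which this post does not show; `Step`, `build_fresh_context`, `run_plan`, and the stub agent are hypothetical names used only to illustrate the idea that each step sees the spec and its own task, never the accumulated history.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    name: str
    instruction: str
    done_when: str  # acceptance criterion used in the verify phase


@dataclass
class RunLog:
    completed: List[str] = field(default_factory=list)
    failed: List[str] = field(default_factory=list)


def build_fresh_context(spec: str, step: Step) -> str:
    """Build the prompt for one step from scratch: spec plus current task only."""
    return f"SPEC:\n{spec}\n\nTASK: {step.instruction}\nDONE WHEN: {step.done_when}"


def run_plan(
    spec: str,
    steps: List[Step],
    agent: Callable[[str], str],
    verify: Callable[[Step, str], bool],
) -> RunLog:
    """Execute steps one by one, each in a fresh context, verifying before moving on."""
    log = RunLog()
    for step in steps:
        output = agent(build_fresh_context(spec, step))
        if verify(step, output):
            log.completed.append(step.name)
        else:
            log.failed.append(step.name)
            break  # stop here and hand control back to the human
    return log


# Stub agent and verifier so the sketch runs without any model call.
seen_contexts: List[str] = []


def stub_agent(context: str) -> str:
    seen_contexts.append(context)
    return "error" if "broken" in context else "ok"


def stub_verify(step: Step, output: str) -> bool:
    return output == "ok"


steps = [
    Step("fetch", "Pull last 90 days of search queries", "rows are loaded"),
    Step("analyze", "Run the broken analysis", "report is written"),
    Step("publish", "Write the summary", "summary exists"),
]
log = run_plan("Assess whether positioning is landing", steps, stub_agent, stub_verify)
print(log)
```

Note that the second context contains nothing from the first step. That is the whole point: the prompt size stays roughly constant no matter how long the plan is, and a failed verification stops the run instead of compounding.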

Practically, this means that you can walk away while the agent works. This is not always the case, and it requires clear upfront thinking. But once the context is right and the plan is set, the work genuinely happens while you're doing something else.

The Six Things That Actually Make This Work

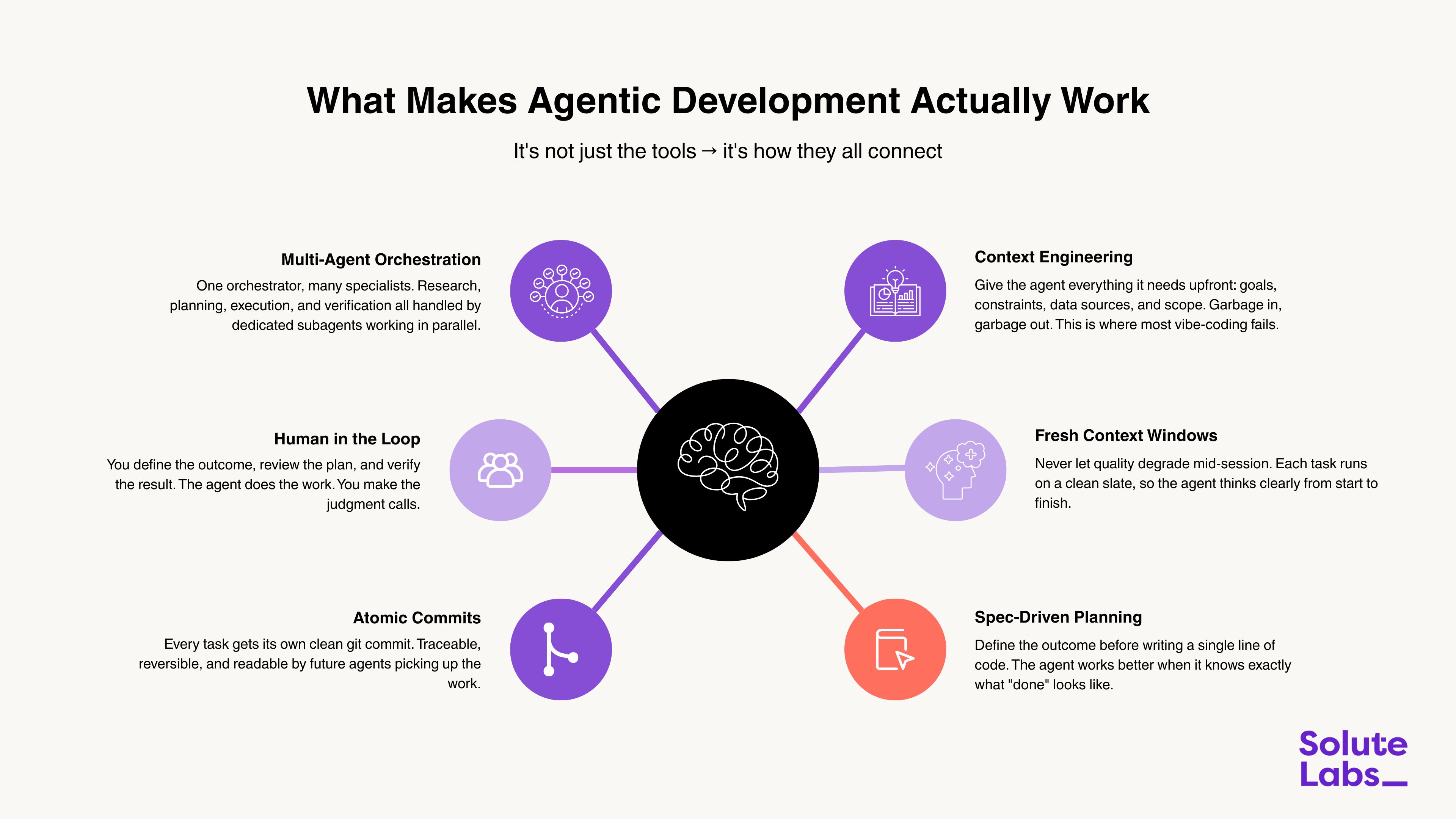

After the build, I sat down and tried to articulate what the difference is between agentic development that produces something useful and agentic development that produces a mess. The graphic above is what I came up with. I think it applies whether you're building a weekend agent like I did or running a production AI system for a client.

The six elements are worth unpacking briefly because they're not obvious until you've experienced what happens when one of them is missing.

Context engineering is where most vibe-coding breaks down. If you haven't given the agent a clear picture of your goals, constraints, data sources, and what "done" looks like, you'll get output that technically answers the question you asked rather than the question you meant. The agent is only as smart as the context you give it.

Fresh context windows, handled automatically by GSD, solve the quality degradation problem. Each task starts with a clean slate. This sounds like a small implementation detail, but it has an enormous effect on output consistency over a long build.

Spec-driven planning means defining the outcome before writing a single line of code. This is counterintuitive for people used to iterative development, but agents work far better when they know exactly what done looks like rather than figuring it out as they go.

Multi-agent orchestration is what turns a single capable agent into a system. One orchestrator coordinates the work; specialist subagents handle research, implementation, and verification in parallel. You're not running one AI agent; you're running a small team of them!
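As a rough picture of the orchestrator pattern, the sketch below fans one topic out to three specialists in parallel and collects their results. The specialist functions are plain Python stand-ins (an assumption for illustration); in a real system each would be a subagent with its own context, and this says nothing about how Claude Code or GSD schedule theirs.

```python
from concurrent.futures import ThreadPoolExecutor


# Stand-in specialists: in practice each would be a separate subagent call.
def research(topic: str) -> str:
    return f"research notes on {topic}"


def implement(topic: str) -> str:
    return f"draft implementation for {topic}"


def verify(topic: str) -> str:
    return f"verification checklist for {topic}"


SPECIALISTS = {
    "research": research,
    "implementation": implement,
    "verification": verify,
}


def orchestrate(topic: str) -> dict:
    """Run every specialist on the same topic concurrently and gather the results."""
    with ThreadPoolExecutor(max_workers=len(SPECIALISTS)) as pool:
        futures = {role: pool.submit(fn, topic) for role, fn in SPECIALISTS.items()}
        # .result() blocks until that specialist has finished (or re-raises its error).
        return {role: future.result() for role, future in futures.items()}


results = orchestrate("keyword gap analysis")
for role, output in results.items():
    print(f"{role}: {output}")
```

The orchestrator itself stays small: it only decides who works on what and merges the answers, which is what keeps the main session lean.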

Human in the loop is the one people sometimes want to skip, and it's the most important. You define the outcome. You review the plan. You verify the result. The agent does the work between those checkpoints. Removing yourself from that process is where things go wrong.

Atomic commits mean every task gets its own clean Git commit. It sounds like a developer hygiene point, but it's actually about future-proofing. A clean Git history means future agents (and future you) can understand exactly what happened and why. It also means you can revert one task without losing everything else.
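One way to picture "one task, one commit" is a small helper that builds the Git commands for a single task. This is a sketch under assumptions: the `task(<id>): summary` message format and the helper names are hypothetical conventions, not something GSD prescribes in this post. The Git commands themselves (`git add`, `git commit -m`) are standard.

```python
import subprocess
from typing import List


def commit_commands(task_id: str, summary: str, paths: List[str]) -> List[List[str]]:
    """Build the git commands that record exactly one task as exactly one commit."""
    if not paths:
        raise ValueError("an atomic commit needs at least one changed path")
    message = f"task({task_id}): {summary}"  # hypothetical message convention
    return [
        ["git", "add", "--", *paths],      # stage only this task's files
        ["git", "commit", "-m", message],  # one commit per task
    ]


def run_commands(commands: List[List[str]], cwd: str = ".") -> None:
    """Execute the commands in a repository; raises if any git call fails."""
    for cmd in commands:
        subprocess.run(cmd, cwd=cwd, check=True)


# Building the commands is side-effect free, so it is safe to inspect them first.
cmds = commit_commands("03", "add GSC query loader", ["loaders/gsc.py"])
for cmd in cmds:
    print(" ".join(cmd))
```

Because each task lands as a single commit, undoing it later is a single `git revert <commit>` on that commit, leaving every other task's work in place.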

What This Means for How We Build at SoluteLabs

The Saturday agent project wasn't a one-off experiment. It was the same philosophy we've been applying across SoluteLabs for the past couple of years, just turned inward on our own business.

We started where most teams start: using AI to write code faster. We implemented Copilot, improved autocomplete, and reduced boilerplate. That felt significant at the time. But agentic development is a categorically different thing. You're not asking AI to help you do a task. You're defining the outcome, connecting the right inputs, and letting the agent determine the path. The work happens in the background.

At SoluteLabs, our sales research is agentic. Our content analysis is agentic. The way we qualify leads, enrich prospect data, and personalize outreach all have agentic layers running underneath them.

And increasingly, when we start a new client engagement, the first question we ask isn't "what do you want to build?" It's "what in your current workflow should a human not be doing at all?"

That shift in the question changes everything about the answer.

The Honest Version of the Pitch

I'm not writing this to make agentic development sound easy. It took me a full day and then some to build something I'm genuinely proud of. It required clear thinking, the right framework, and a willingness to iterate when early outputs weren't right.

But the leverage is real. A consultant doing that same positioning analysis would have taken weeks. The output I got was detailed, specific, and actionable in ways that generic strategy advice rarely is. And now that the agent exists, running it again next quarter takes a fraction of the original effort.

That's the honest version of what agentic development feels like right now. It's not magic. It's a new kind of leverage: one that rewards people who think clearly about outcomes and invest in getting the context right.

That's a skill worth building in 2026.

Why I'm Back (And What I've Been Up To)

If you subscribed a while back and then wondered what happened to me, I owe you an explanation.

Life happened, honestly. The last few months were a mix of personal milestones and the kind of commitments that quietly consume every spare hour you think you have. My brother Rahi got married, which was one of the most joyful things I've been part of in years and also one of the most all-consuming. Between the planning, the celebrations, and just being fully present for something that only happens once, everything else took a backseat. And I don't regret that for a second.

There were a few other personal commitments layered on top of that too, the kind that are hard to schedule around and can't be done halfway. So the newsletter went quiet, and I made peace with that.

But here's what's interesting: even while I was away from writing, I kept building. The ideas were stacking up in my head during those months, about where AI development is actually heading, about what agentic systems feel like when you live inside them, and about the gap between what people say about AI and what's actually true. Those didn't go anywhere. If anything, the time away gave me more to say rather than less.

So this isn't just a comeback issue. It's the start of what I think will be the most interesting chapter yet for this newsletter, Brew. Build. Breakthrough. The things I've been building and thinking about over these past few months are genuinely worth talking about.

Glad to be back. Let's get into it.

About me

Working from the Mountains

Karan Shah: Engineer turned Founder

15 years ago, I started as a software engineer and took the entrepreneurial plunge with less than 5 years of experience.

Since then, I've been building at the intersection of product engineering, design, and AI. From zero-to-one MVPs to clients raising funds and going for an IPO.

Today, SoluteLabs is 11 years old, and this newsletter is where I think out loud about all of it.

Brew. Build. Breakthrough.

Karan Shah

Founder & CEO, SoluteLabs

Building AI-native products before it became cool.